Public note

VibeVoice: Why Microsoft’s Open-Source Voice AI Project Matters

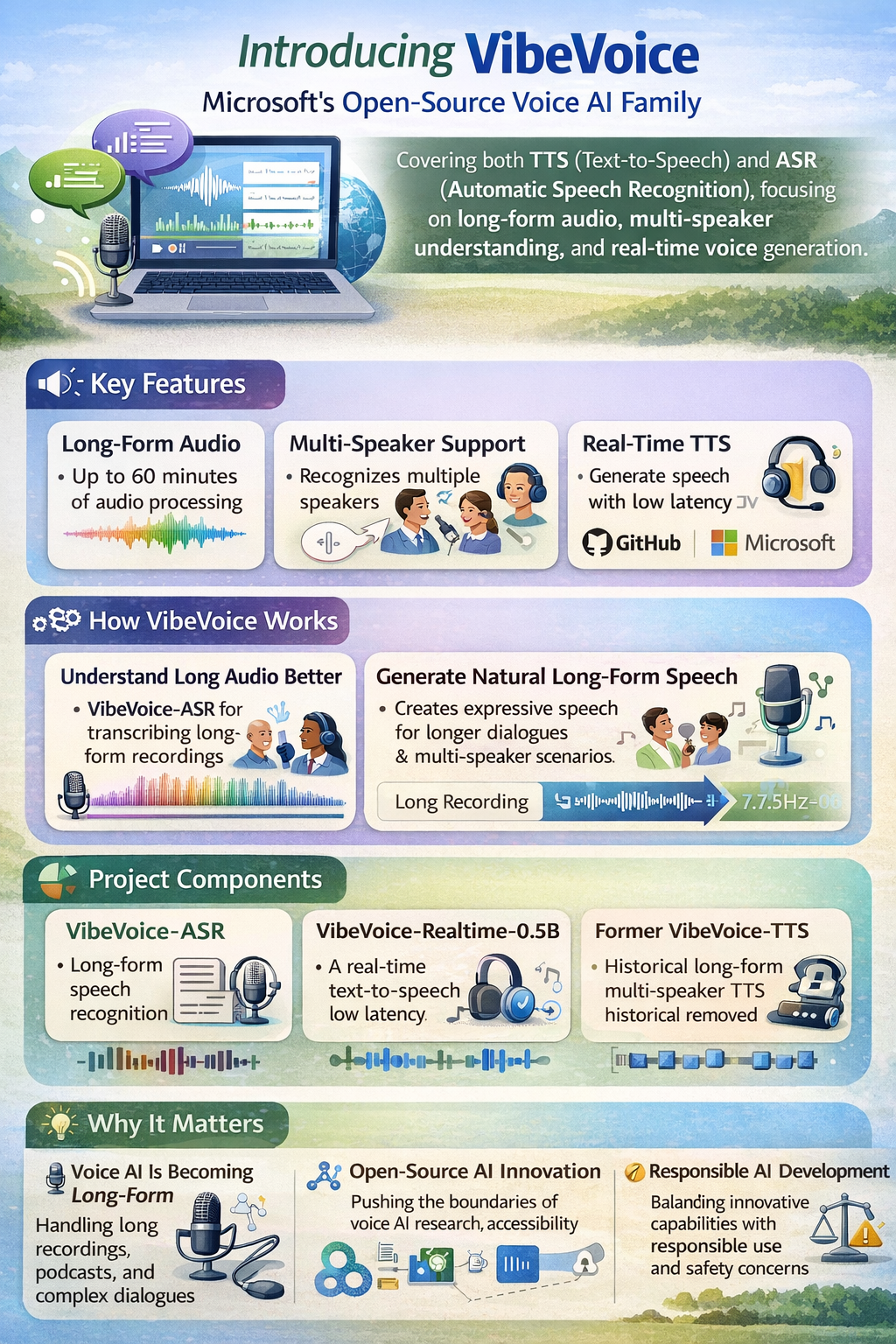

Microsoft's VibeVoice is an advanced open-source voice AI family focusing on long-form audio tasks including multi-speaker speech recognition and real-time text-to-speech generation.

Summary

VibeVoice is Microsoft’s open-source voice AI family covering both text-to-speech (TTS) and automatic speech recognition (ASR). The project is interesting not just because it generates or transcribes speech, but because it focuses on long-form audio, multi-speaker understanding, and real-time voice generation.

For people interested in IT, the easiest way to think about VibeVoice is this:

It is an example of how voice AI is moving beyond short voice clips and simple assistants toward more realistic, scalable, and conversation-aware audio systems.

That matters because voice is becoming a bigger part of how people interact with software, devices, and AI services.

What the Project Is

The GitHub repository describes VibeVoice as “Open-Source Frontier Voice AI.” Today, the repo presents the project as a family of models that includes:

- VibeVoice-ASR for long-form speech recognition

- VibeVoice-Realtime-0.5B for real-time text-to-speech

- a historical VibeVoice-TTS long-form multi-speaker TTS project whose code was later removed from the repository

In plain English, this means VibeVoice is not just one model. It is a broader voice-AI effort aimed at handling speech generation and speech understanding at a much larger scale than traditional short-form tools.

This makes it relevant to:

- people following AI assistants

- anyone interested in voice interfaces

- IT readers tracking open-source AI

- teams thinking about podcasts, captions, transcription, or spoken interaction

Why VibeVoice Matters

Many voice systems work well for short commands, short dictation, or short generated clips. VibeVoice stands out because it focuses on longer and more complex audio tasks.

That includes things like:

- generating long-form spoken audio

- handling multiple speakers

- recognizing who said what and when

- supporting real-time voice output

- working across multiple languages

For everyday IT readers, the bigger story is simple:

Voice AI is becoming more usable for real-world conversations, long recordings, and richer media workflows.

That is a major step beyond the early generation of voice tools, which often struggled with consistency over longer sessions.

What the Project Currently Includes

1. VibeVoice-ASR

The repository currently highlights VibeVoice-ASR as a long-form speech-recognition model. According to the README, it can process up to 60 minutes of audio in a single pass and produce structured transcripts that capture:

- Who spoke

- When they spoke

- What they said

The project also says users can add customized hotwords such as names, technical terms, or domain-specific context to improve recognition accuracy.

This is important because it makes VibeVoice-ASR more than a basic speech-to-text tool. It is trying to combine:

- transcription

- speaker diarization

- timestamping

- contextual guidance

In January 2026, Microsoft open-sourced VibeVoice-ASR, and in March 2026 the repository announced that the model had become part of a Transformers release, which makes it easier to integrate into AI workflows.

2. VibeVoice-Realtime-0.5B

The repo also includes VibeVoice-Realtime-0.5B, a lightweight real-time TTS model.

According to the README, this model is designed for:

- streaming text input

- around 300 milliseconds first audible latency

- about 10 minutes of robust long-form speech generation

- a smaller 0.5B parameter footprint aimed at easier deployment

This is interesting for IT readers because it shows another major direction in AI: users increasingly expect systems to speak back quickly, not just generate a final result after a long wait.

That makes real-time voice generation useful for:

- interactive assistants

- live reading tools

- voice interfaces

- accessibility features

- experimental conversational products

3. The Original VibeVoice-TTS Story

One of the most important parts of the VibeVoice story is also the most unusual.

Microsoft originally open-sourced VibeVoice-TTS in August 2025 as a long-form multi-speaker text-to-speech model that the project page described as able to synthesize up to 90 minutes of audio with up to 4 speakers.

However, the repository later announced that the VibeVoice-TTS code was removed after Microsoft discovered uses that were inconsistent with the project’s stated intent. The public project page says the repo was disabled at that time, and the GitHub README now notes that the TTS code has been removed while keeping the broader framework and newer models visible.

This matters because it shows that modern AI projects are no longer only technical stories. They are also governance and responsible-use stories.

How It Works in Simple Terms

A beginner-friendly way to understand VibeVoice is as a voice AI stack with three main ideas.

1. Understand long audio better

Instead of breaking everything into very small chunks, VibeVoice-ASR is designed to process very long recordings in one pass, which helps preserve context.

2. Generate more natural long-form speech

The TTS side of the project focuses on conversational flow, multi-speaker support, and expressive speech.

3. Use more efficient speech tokens

The repository says a key innovation is the use of continuous speech tokenizers operating at 7.5 Hz, which aim to preserve audio quality while improving efficiency for long sequences.

The README also says VibeVoice uses a next-token diffusion design: a large language model helps track text context and dialogue flow, while a diffusion head generates high-fidelity acoustic details.

For non-specialists, the core message is this:

VibeVoice is trying to make voice AI both longer-lasting and more coherent, without losing audio quality.

Why This Is Interesting for People in IT

Even if you are not a machine-learning engineer, VibeVoice is still worth paying attention to because it captures several important trends.

Voice AI is becoming long-form

Short voice commands are old news. The more interesting challenge is handling interviews, meetings, podcasts, lectures, and long dialogues.

AI products are becoming multimodal by default

Typing is no longer enough. The future of software includes speaking, listening, and responding naturally.

Open-source AI keeps pushing the frontier

VibeVoice shows that some of the most advanced work in speech AI is available for public research and experimentation, not only inside closed commercial platforms.

Safety and misuse are now part of the product story

The removal of the original TTS code is a reminder that high-quality voice generation raises real concerns around misuse, impersonation, and trust.

Strengths

- Strong focus on long-form voice tasks

- Covers both speech recognition and speech generation

- Includes structured transcription with speaker and timestamp awareness

- Supports multilingual scenarios

- Real-time TTS option broadens the project’s practical appeal

- Public traction is very strong, suggesting wide interest from the open-source community

- Backed by Microsoft, which gives the project visibility and research weight

Caveats

A balanced report should also mention the limits.

1. The project is not a consumer app

VibeVoice is an open-source AI project, not a simple everyday end-user product.

2. Some of the most headline-grabbing TTS work is no longer fully available in repo code

The original long-form multi-speaker TTS code was removed after misuse concerns.

3. Microsoft explicitly warns about risks and limitations

The README says the models may produce unexpected, biased, or inaccurate output, warns about deepfake and disinformation risks, and says the models are intended for research and development purposes only.

4. It is not recommended for commercial or real-world deployment without more testing

The repository explicitly says Microsoft does not recommend using VibeVoice in commercial or real-world applications without further testing and development.

Current Momentum

VibeVoice has strong public traction on GitHub. At the time of writing, the repository shows roughly:

- 27k stars

- 3k forks

- 97 commits

- an MIT license

The public repository also shows recent momentum in 2026, including:

- January 21, 2026: open-sourcing of VibeVoice-ASR

- March 6, 2026: announcement that VibeVoice-ASR is part of a Transformers release

- March 29, 2026: announcement that the open-source project Vibing is using VibeVoice-ASR as the base of a voice-powered input method

That suggests VibeVoice is not standing still. It is being adopted, integrated, and extended.

Why This Project Is Good News

The good news about VibeVoice is not just that it is popular. The bigger story is that it makes advanced voice AI easier to study and understand.

For IT readers, it shows that the future of AI is not only about text chat. It is also about:

- long-form listening

- speaker-aware transcription

- expressive voice generation

- real-time spoken interaction

- safer and more responsible release practices

That combination makes VibeVoice one of the more interesting open-source voice projects to watch.

Conclusion

VibeVoice is best understood as a high-ambition voice AI project that shows both the promise and the tension of modern generative technology.

In the simplest terms:

It is Microsoft’s attempt to push open-source voice AI toward longer, smarter, and more realistic speech workflows.

That makes it relevant not only to developers, but to anyone trying to understand where AI interfaces are going next.

The project is impressive because of what it can do.

It is important because of what it reveals about the future of voice, multimodal AI, and responsible open-source release strategy.