
Onyx Report: Understanding the Project Through Its Deep Research Benchmark Work

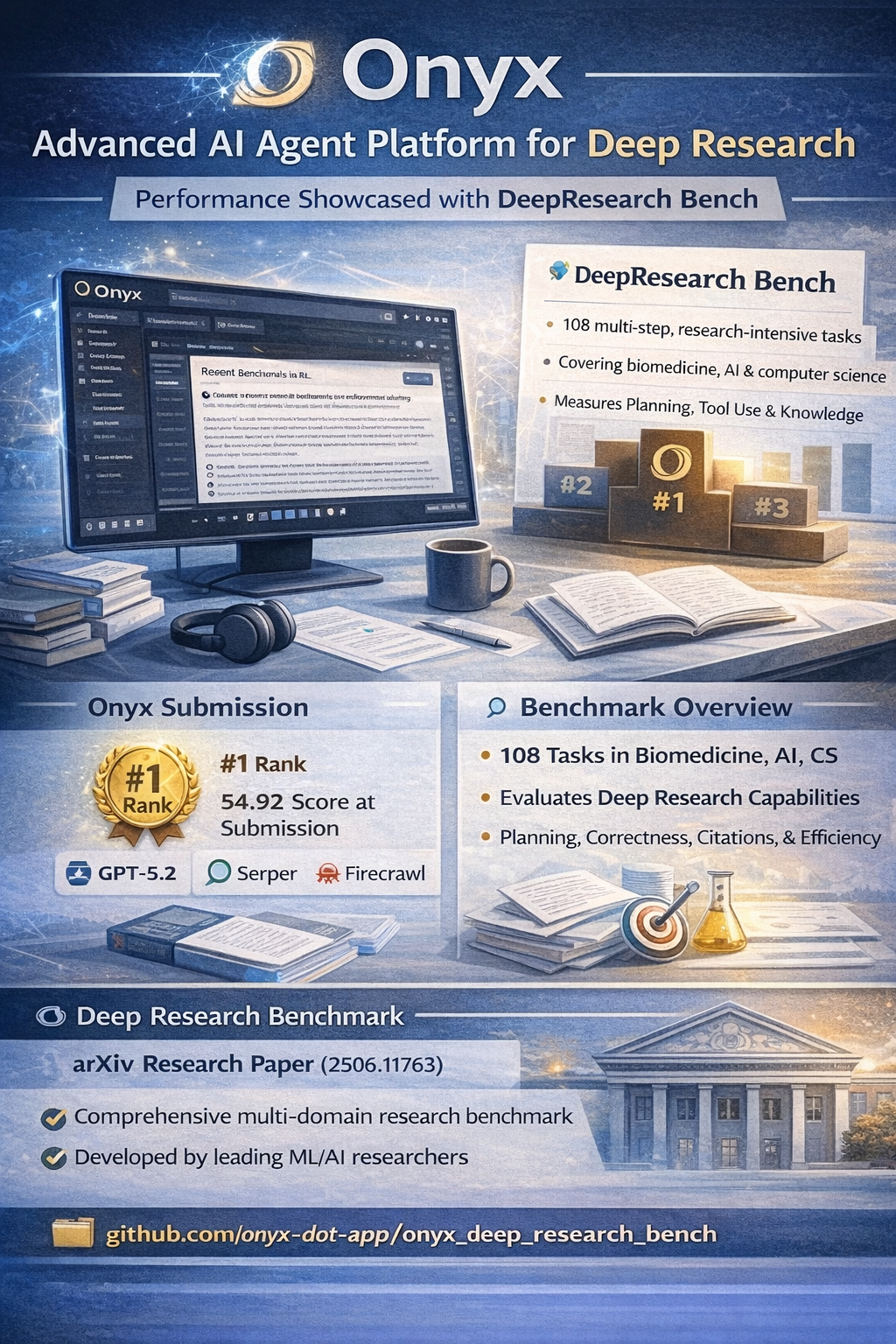

Onyx is an open-source AI platform featuring deep research capabilities, as evidenced by its submission to the DeepResearch Bench benchmark. The benchmark evaluates high-complexity tasks and highlights Onyx's performance in generating comprehensive, citation-rich research reports.

Summary

Onyx is best understood as an open-source AI platform for teams, not just a chat interface. The main GitHub repository presents it as an “application layer for LLMs” with capabilities such as RAG, web search, code execution, file creation, custom agents, and deep research. In the product docs, Onyx is described as a system that can connect to internal knowledge, applications, and the web, then expose those capabilities through multiple surfaces such as the web app, desktop app, Slack, browser extension, CLI, and MCP integrations.

However, if you want the clearest way to evaluate what makes Onyx stand out technically, the most useful lens is not the product homepage. It is the combination of:

- the onyx_deep_research_bench repository, which shows how the Onyx team submitted its system to a public deep-research benchmark, and

- the paper “DeepResearch Bench: A Comprehensive Benchmark for Deep Research Agents” (arXiv:2506.11763), which explains what that benchmark is actually trying to measure.

That combination matters because it shifts the conversation from generic platform claims to a harder question:

Can Onyx produce strong, citation-rich, long-form research reports under a public evaluation framework?

The answer, based on Onyx’s benchmark submission, is that deep research is not a side feature for Onyx. It is one of the most serious and externally visible capabilities in the project.

What Onyx Is

The main Onyx repository describes the project as “The Open Source AI Platform” and “the application layer for LLMs.” It says Onyx supports advanced capabilities including RAG, web search, code execution, file creation, and deep research, and that it can connect to applications through 50+ indexing-based connectors out of the box or through MCP.

In practical terms, this means Onyx is designed to sit between foundation models and the messy reality of team knowledge and workflows. Instead of giving users a generic chatbot that only knows public information, Onyx tries to provide a secure, self-hostable platform that can combine:

- internal documents and apps

- live web information

- agent-like workflows

- actions and tool use

- multiple interfaces for different users

The docs also make clear that Deep Research is part of the platform’s core experience. In the chat documentation, Onyx says users can enable a Deep Research mode that lets the system run many cycles of thinking, research, and actions to answer more difficult questions. It warns that this mode may take several minutes and can cost more than 10× the tokens of a normal inference, which is a useful signal that Onyx treats deep research as a heavier but more capable operating mode rather than a cosmetic toggle.

That framing is important. Onyx is not merely saying, “We have search.” It is saying, “We have a more expensive, longer-running, multi-step research flow intended for complicated tasks.”

Why the Benchmark Matters More Than Marketing

A lot of AI products now claim they can do “deep research.” The phrase is becoming vague. What makes Onyx more interesting is that the team did not stop at product copy. It published a dedicated repository, onyx_deep_research_bench, for its benchmark submission.

That submission repo matters because it shows three things at once:

1. Onyx treated deep research as a measurable system capability

The repo is explicitly labeled as Onyx’s submission to Deep Research Benchmark. It includes the benchmark-format result files, raw and cleaned outputs, per-report metric files, and a directory of research-agent task logs.

That is more meaningful than a demo. It means the Onyx team was willing to have its system judged in a structured public evaluation setting.

2. The benchmark result is clearly described as a system result, not just a model result

The submission repo explains that the evaluated reports were produced with a nightly build of Onyx and relied on a specific external stack:

- LLM: GPT-5.2

- Web search API: Serper

- Web crawler: Firecrawl

It also notes that the same Deep Research flow can be run with other LLMs and search/crawling APIs, but results will vary depending on setup.

This is one of the most important points in the whole story. The submission is not saying “Onyx beat everything because of one secret model.” It is showing that the benchmark result reflects a full research system configuration: product orchestration, prompting, retrieval, crawling, answer synthesis, and user-experience constraints.

3. The repo exposes the practical product constraints behind the score

The submission repo says Onyx is a production system with user-experience constraints, including:

- a 30-minute maximum for all research and answer generation on a question

- report outputs typically around 10,000 tokens, though sometimes over 20,000

- a maximum 2-minute gap between user-facing outputs

That is highly relevant for anyone trying to understand the real product. Onyx did not optimize purely for abstract benchmark output. It says the system was tuned with usability, latency, and concision in mind.

That makes the result more meaningful than a lab-only number.
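The two timing limits listed above can be made concrete with a small check. This is only an illustrative sketch: the function name, the input format, and the idea of checking a run after the fact are assumptions made for this report, not code from the Onyx repository. Only the 30-minute and 2-minute figures come from the submission repo.

```python
# Limits quoted from the submission repo; all names here are hypothetical.
MAX_TOTAL_SECONDS = 30 * 60  # 30-minute cap on research plus answer generation
MAX_GAP_SECONDS = 2 * 60     # at most 2 minutes between user-facing outputs


def violates_constraints(output_times: list[float]) -> list[str]:
    """Return the limits a run breaks.

    output_times holds the seconds since the question was asked at which each
    user-facing output appeared; the last entry is the finished report.
    """
    problems = []
    if output_times and output_times[-1] > MAX_TOTAL_SECONDS:
        problems.append("total time over 30 minutes")
    previous = 0.0
    for t in output_times:
        if t - previous > MAX_GAP_SECONDS:
            problems.append("gap over 2 minutes between outputs")
            break
        previous = t
    return problems
```

For example, `violates_constraints([60, 150, 260])` returns an empty list, while a run that goes silent for 140 seconds is flagged even if it finishes well inside the 30-minute cap. That second case is what separates a product constraint from a pure benchmark budget.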

What DeepResearch Bench Measures

The paper “DeepResearch Bench: A Comprehensive Benchmark for Deep Research Agents” is important because it explains what kind of task Onyx was being tested on.

According to the paper abstract and the benchmark repository:

- the benchmark contains 100 PhD-level research tasks

- the tasks were created by domain experts across 22 distinct fields

- the benchmark is designed specifically for Deep Research Agents (DRAs)

- the authors propose two evaluation approaches:

  - a reference-based, adaptive-criteria method for evaluating research-report quality

  - a second framework for evaluating information retrieval and collection quality, including effective citation count and citation accuracy
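The two citation measures named in the list above can be illustrated with a short sketch. It assumes a simple reading of the terms: citation accuracy as the share of citations judged to support their statement, and effective citation count as the average number of supported citations per task. The paper's exact definitions and extraction pipeline may differ, and the function name and input format are hypothetical.

```python
def citation_metrics(tasks: list[list[bool]]) -> tuple[float, float]:
    """Compute (citation_accuracy, effective_citations_per_task).

    Each inner list is one report; each bool says whether a cited source was
    judged to support the statement it is attached to (an assumed input format).
    """
    total = sum(len(report) for report in tasks)
    supported = sum(sum(report) for report in tasks)
    accuracy = supported / total if total else 0.0
    effective = supported / len(tasks) if tasks else 0.0
    return accuracy, effective
```

With two reports, one carrying three citations of which two are supported and one carrying a single supported citation, `citation_metrics([[True, True, False], [True]])` gives an accuracy of 0.75 and 1.5 effective citations per task. The point of keeping both numbers is that a report can cite a lot and still cite badly.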

The benchmark repository goes further and names the report-quality framework RACE (Reference-based Adaptive Criteria-driven Evaluation). It says RACE evaluates reports across four main dimensions:

- Comprehensiveness

- Insight / Depth

- Instruction-Following

- Readability

This is why the paper matters so much for understanding Onyx.

It tells us that DeepResearch Bench is not a simple factual QA benchmark. It is trying to evaluate whether an agent can produce something closer to an analyst-grade, citation-rich research report. That is a harder and more realistic target than “can it answer one question correctly.”

For a project like Onyx, this is exactly the right kind of external evaluation. Onyx is not trying to be a toy assistant. It is trying to be a system that can carry out serious multi-step research.

How Onyx Performed

The Onyx submission repository includes a benchmark table in its README. In that submission snapshot, Onyx reports the following result:

- Rank: 1

- Overall: 54.92

- Comprehensiveness: 55.07

- Insight: 57.10

- Instruction Following: 52.99

- Readability: 52.41

That score matters less as a bragging number than as a signal of how Onyx is positioned technically.

The standout point in the submission table is Insight (57.10). If you are trying to evaluate whether a research agent can do more than collect facts, that number is important. It suggests the system was especially strong at analysis and synthesis, not only retrieval.
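A quick arithmetic check on the dimension scores above shows how they relate to the overall figure. The sketch uses only the numbers quoted from the submission README; the variable names are illustrative, and the plain average is a comparison point chosen for this report, not the benchmark's scoring formula.

```python
# Scores as quoted from the Onyx submission README.
scores = {
    "Comprehensiveness": 55.07,
    "Insight": 57.10,
    "Instruction Following": 52.99,
    "Readability": 52.41,
}
reported_overall = 54.92

# Plain average of the four dimensions, for comparison only.
unweighted_mean = sum(scores.values()) / len(scores)
strongest = max(scores, key=scores.get)
gap = reported_overall - unweighted_mean

print(f"unweighted mean: {unweighted_mean:.2f}")
print(f"strongest dimension: {strongest}")
print(f"reported overall minus mean: {gap:+.2f}")
```

The plain average comes to about 54.39, roughly half a point below the reported 54.92, so the overall score is evidently not a simple mean of the four dimensions. How the benchmark actually combines them is a matter for the paper; the check only confirms that Insight is the highest of the four and that the overall number should not be re-derived by naive averaging.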

At the same time, it is also important to keep perspective. The broader DeepResearch Bench ecosystem continued evolving after the Onyx submission snapshot. The benchmark repository’s news feed shows that many new systems were added in March and April 2026, and the public leaderboard kept moving. In other words, Onyx’s “rank 1” claim should be read as a submission-time snapshot, not as a permanent universal ranking.

That nuance makes the result more credible, not less.

What This Tells Us About the Main Onyx Project

When you connect the main Onyx repo, the benchmark submission repo, and the benchmark paper, a clearer picture emerges.

Deep research is a flagship capability, not a side feature

The main repo advertises deep research in the feature list. The docs expose it in the chat interface and API. The deployment docs say self-hosted open-source users get access to Deep Research alongside Chat, Agents, Actions, and Connectors. And the benchmark submission repo shows that the team invested in public evaluation of this capability.

That means deep research is one of the best ways to understand what Onyx wants to be.

Onyx is trying to bridge product UX and research-agent performance

Many research-agent projects optimize for maximum output quality without caring much about usability. Many enterprise AI platforms optimize for usability but do not show much public evidence that their long-form reasoning is competitive.

Onyx is interesting because its submission repo explicitly talks about latency caps, report length constraints, and human usability, while still participating in a benchmark that measures long-form report quality.

That combination is rare.

The benchmark result should be interpreted as a platform-system result

Because the submission used GPT-5.2, Serper, and Firecrawl, the benchmark result reflects how well Onyx performs as an orchestration and product system when configured with a high-quality external stack. This is actually a strength of the result, because Onyx is a platform. The platform’s value lies in how well it organizes models, retrieval, tools, and UX into one working product.

Caveats and Limits

A balanced report should also be clear about what the benchmark does not prove.

1. The benchmark does not evaluate the whole Onyx platform

Onyx also includes connectors, agents, code execution, artifacts, voice mode, browser extensions, and deployment workflows. DeepResearch Bench is valuable, but it only measures one slice of the product: deep-research report quality and citation-related retrieval quality.

2. The score depends on system configuration

The Onyx submission used a specific stack. A different model, web search provider, or crawler could change the result.

3. Benchmark snapshots age quickly

The benchmark leaderboard is active and keeps adding new systems. A strong score remains meaningful, but rankings move.

4. Deep research is expensive by design

Onyx’s own docs say Deep Research may take several minutes and cost more than 10× a normal inference. That means it is a premium capability from a compute and product-design perspective, not something every query should use.

Bottom Line

If you want the simplest and most useful way to evaluate Onyx, do not start with the connector count or the chat UI.

Start here instead:

- the main Onyx project says deep research is one of its core capabilities

- the onyx_deep_research_bench repo shows the team was willing to submit that capability to public evaluation

- the DeepResearch Bench paper explains that the evaluation is aimed at high-difficulty, long-form, citation-rich research tasks rather than shallow question answering

That combination leads to a clear conclusion:

Onyx is not just an enterprise chat platform with a search box. It is trying to become a serious deep-research system inside a broader open-source AI platform.

That is what makes the project worth paying attention to.

Its benchmark performance does not mean it has solved enterprise AI. But it does show that one of Onyx’s most ambitious claims — deep, multi-step research — has been tested in a serious external framework and performed strongly enough to matter.

Sources

- Onyx main repository: https://github.com/onyx-dot-app/onyx

- Onyx releases: https://github.com/onyx-dot-app/onyx/releases

- Onyx benchmark submission repository: https://github.com/onyx-dot-app/onyx_deep_research_bench

- Onyx documentation welcome page: https://docs.onyx.app/welcome

- Onyx chat / deep research docs: https://docs.onyx.app/overview/core_features/chat

- Onyx deployment overview: https://docs.onyx.app/deployment/overview

- DeepResearch Bench paper: https://arxiv.org/abs/2506.11763

- DeepResearch Bench repository: https://github.com/Ayanami0730/deep_research_bench