
Goose Wants to Be the Open Agent Runtime for Developers — Not Just Another Coding Assistant

Subheadline

Goose is an open-source AI agent project that runs locally as a desktop app, CLI, and API, while connecting to outside tools through open standards like MCP and ACP. What makes it notable is not just model support, but its attempt to package workflows, safety controls, and extensibility into one native agent platform.

Lead

Open-source AI agents are no longer content to behave like chat windows with a few extra buttons. Goose is part of a newer wave of projects trying to become a real execution layer: software that can plan, call tools, manage context, automate workflows, and still remain portable enough to run on a developer’s own machine.

That is why Goose is worth watching. The project is not pitching itself merely as a code helper. It is positioning itself as a general-purpose local AI agent for code, research, automation, writing, and data work, delivered across a desktop app, terminal interface, and embeddable API. In a market crowded with increasingly similar “AI coding agents,” Goose is trying to differentiate itself through openness, standards support, reusable recipes, and a more explicit security model.

At a Glance

- Goose is an open-source AI agent with desktop, CLI, and API interfaces.

- It works with 15+ model providers and can also use subscription-based coding agents through ACP.

- The project supports 70+ documented MCP extensions, making it unusually tool-centric.

- Goose now sits under the Agentic AI Foundation at the Linux Foundation, a governance move aimed at vendor neutrality.

- Built-in features such as recipes, subagents, permissions, sandboxing, and adversary review push it beyond the “chat with tools” baseline.

- The GitHub repository shows strong momentum: 41k+ stars and v1.30.0 tagged as the latest release on April 8, 2026.

What Happened

Goose has matured from a promising open-source agent into a broader platform story. Its GitHub repository now describes it as a “native open source AI agent” for code, workflows, and everything in between, while the official site emphasizes a wider product surface: desktop app, CLI, API, recipes, MCP apps, subagents, and security tooling.

The project’s recent identity shift matters too. Its GitHub repository has moved from block/goose to aaif-goose/goose, and the project is now part of the Agentic AI Foundation (AAIF) at the Linux Foundation. That is not just a branding footnote. In today’s agent market, where many tools are deeply tied to one vendor or model provider, governance and neutrality are becoming part of the product pitch.

Goose is also shipping quickly. The public GitHub releases page lists 127 releases, with v1.30.0 marked as the latest release on April 8, 2026.

Key Facts / Comparison

| Category | What Goose Offers | Why It Matters |
|---|---|---|
| Interfaces | Desktop app, CLI, API | Lets the same agent runtime fit interactive, terminal, and embedded use cases |
| Model access | 15+ providers plus ACP-compatible coding agents | Reduces lock-in and broadens deployment choices |
| Tool ecosystem | 70+ MCP extensions | Makes Goose more useful as an execution environment, not just a chat UI |
| Workflow reuse | Recipes and subrecipes | Turns one-off prompts into repeatable automation |
| Parallelism | Subagents and parallel subrecipes | Suggests Goose is moving toward orchestrated agent work, not single-thread chat |
| UI layer | MCP Apps / MCP-UI support in Desktop | Gives extensions a richer interface than plain text responses |
| Security | Prompt injection detection, permission controls, sandboxing, adversary mode | Addresses one of the biggest trust gaps in agent software |
| Governance | AAIF / Linux Foundation | Strengthens the project’s long-term open-source credibility |

Background and Context

The rise of agent software has created a strange split in the market. Many commercial products are polished but closed. Many open-source agents are flexible but fragmented, often feeling like bundles of scripts, prompt templates, and integrations rather than coherent products.

Goose is trying to close that gap. It keeps the openness and composability of the open-source ecosystem, but wraps them in a more productized structure. The official documentation describes Goose as a local agent that can connect to tools and data through Model Context Protocol (MCP), while also acting as an ACP server and using ACP agents such as Claude Code or Codex as providers.

That dual-protocol stance is one of Goose’s most interesting strategic choices. MCP handles connections to tools and data. ACP (Agent Client Protocol) handles the link between a client and an agent, which lets Goose both serve other clients as an agent and drive other agents as a client. Together, they push Goose toward the role of an agent hub rather than a standalone assistant.
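To make the MCP side more concrete: MCP messages are JSON-RPC 2.0, and a client discovers a server’s tools with the `tools/list` method and invokes one with `tools/call`, passing a tool `name` and structured `arguments`. The sketch below only builds those two request payloads. It is not Goose code, it skips the `initialize` handshake and the transport (stdio or HTTP), and the `read_file` tool with its `path` argument is hypothetical.

```python
import json
from typing import Any, Dict, Optional


def make_request(request_id: int, method: str, params: Optional[Dict[str, Any]] = None) -> str:
    """Serialize a JSON-RPC 2.0 request in the shape MCP clients send."""
    message: Dict[str, Any] = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Step 1: ask the server which tools it exposes.
list_tools = make_request(1, "tools/list")

# Step 2: invoke one tool by name with structured arguments.
# "read_file" and "path" are hypothetical; real names come from the tools/list result.
call_tool = make_request(
    2, "tools/call", {"name": "read_file", "arguments": {"path": "README.md"}}
)

print(list_tools)  # {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}
print(call_tool)
```

The point of the protocol is that any MCP-speaking agent, Goose included, can drive any MCP server the same way, which is what makes a catalog of 70+ extensions reusable.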

Why This Matters

Goose matters because it reflects several larger shifts in AI tooling.

First, the winning agent products may not be the ones with the most impressive model demos. They may be the ones that make tool use, workflow reuse, and governance easier to manage in everyday work.

Second, Goose shows how quickly the center of gravity is moving from “AI for code completion” toward AI for operational execution. A system that can edit files, run shell commands, manage projects, call external services, and save reusable task recipes is much closer to a software workbench than to a classic chatbot.

Third, the project is a reminder that open standards are becoming competitive weapons. MCP and ACP are not side notes here; they are central to Goose’s value proposition.

For different audiences, that has different implications:

- Developers get a local agent that can work in terminal-heavy environments and across multiple model providers.

- Teams get reusable recipes and the possibility of standardizing agent workflows.

- Platform builders get an API surface and extensibility story that can be embedded into other products.

- Security-conscious users get more visible controls than many “autonomous agent” demos tend to offer.

Insight and Industry Analysis

The biggest insight from Goose is that the most important contest in AI agents may no longer be about raw model intelligence alone. It may be about runtime design.

Goose is effectively betting that the future agent stack needs six things at once: local execution, model optionality, protocol-based extensibility, reusable workflows, richer interfaces, and explicit safety controls. That is a more ambitious thesis than “we are an AI coding assistant.”

There is also a broader industry signal here. Goose’s integration of MCP, ACP, MCP Apps, recipes, and subagents points toward a world in which AI products resemble operating environments more than standalone assistants. In that picture, the LLM itself becomes only one layer. The higher-value layer is the one that organizes tools, memory, permissions, orchestration, and UX.

That does not guarantee Goose will dominate. But it does mean the project is addressing the right problem: how to make agents usable and governable outside of toy demos.

Pros and Cons

Pros

- Broad interface coverage across desktop, CLI, and API

- Strong protocol strategy with both MCP and ACP support

- Large extension surface with 70+ documented integrations

- Reusable workflows through recipes and subrecipes

- Visible security model including adversary review and sandboxing

- Vendor-neutral governance story under AAIF / Linux Foundation

- High open-source traction and active release cadence

Cons

- Product scope is getting wide, which can make the platform harder to understand for newcomers

- Some advanced capabilities are uneven across interfaces; projects, for example, are currently CLI-only

- Autonomous features still raise trust questions, even with safety controls in place

- The standards ecosystem is still evolving, so some integrations and behaviors may shift over time

- Operational complexity grows with power, especially once users start combining recipes, extensions, and headless execution

Technical Deep Dive

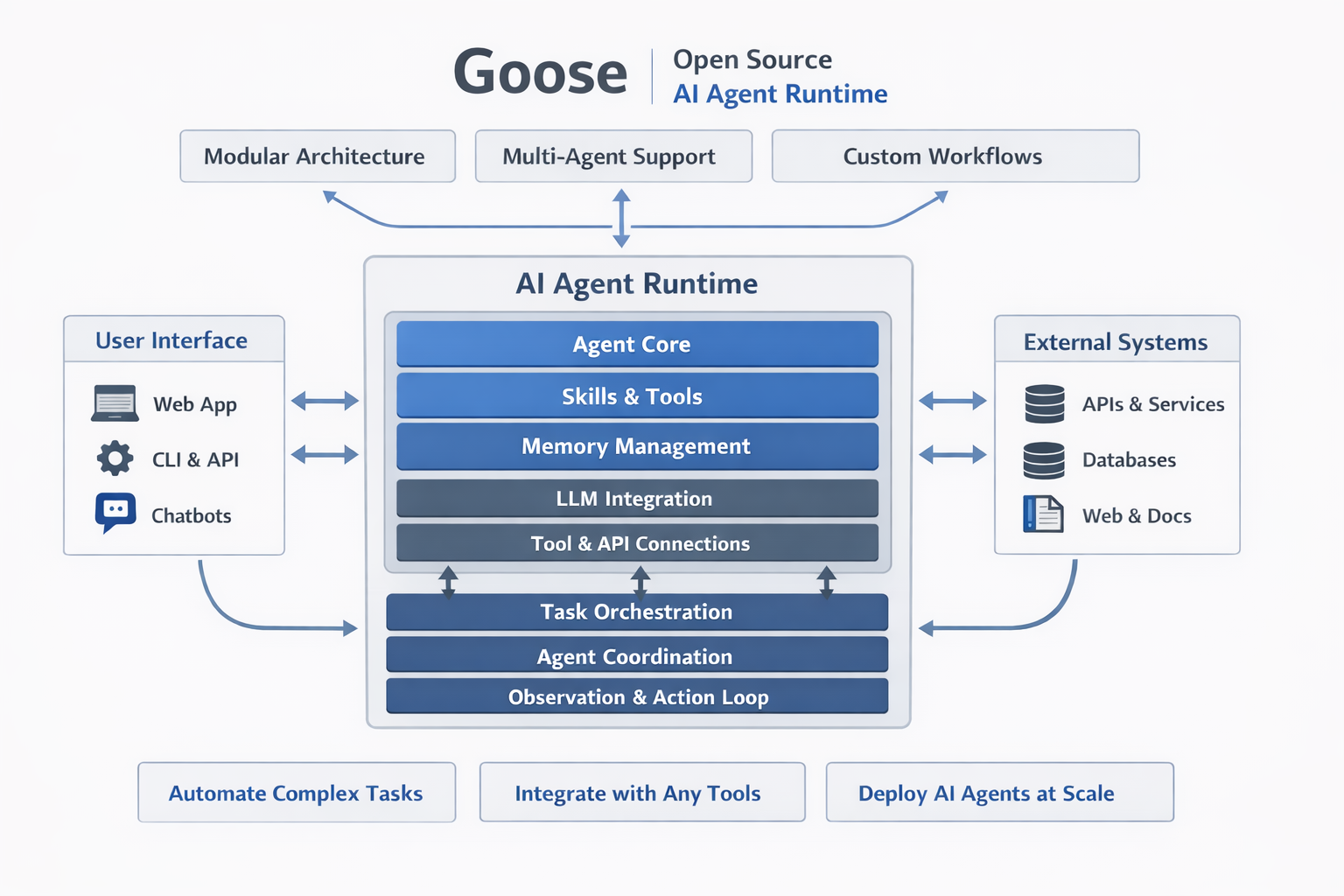

At a technical level, Goose is best understood as a layered agent runtime.

Core Architecture

The documentation describes Goose as building on the basic “text in, text out” behavior of LLMs, then extending it through tool integrations. Its Extensions framework treats each extension as a component that exposes tools and maintains state through a unified interface.

That matters because it creates a cleaner internal model for agent capabilities:

- the model reasons in natural language

- extensions expose callable tools

- workflows package prompts, parameters, and tool access

- the runtime decides how to execute tasks across interactive or automated modes
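The division of labor above can be sketched in a few lines: extensions own tools plus shared state, and a runtime routes each tool call the model emits to the extension that owns it. This is a conceptual illustration under stated assumptions, not Goose’s actual interface; `Tool`, `Extension`, `Runtime`, the `notes` extension, and the `extension__tool` naming scheme are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class Tool:
    name: str
    description: str  # what the model reads when deciding whether to call the tool
    handler: Callable[..., Any]


@dataclass
class Extension:
    """Bundles related tools with the state they share across calls."""
    name: str
    tools: Dict[str, Tool] = field(default_factory=dict)
    state: Dict[str, Any] = field(default_factory=dict)

    def register(self, name: str, description: str, handler: Callable[..., Any]) -> None:
        self.tools[name] = Tool(name, description, handler)


class Runtime:
    """Routes model-issued tool calls to the extension that owns the tool."""

    def __init__(self) -> None:
        self.extensions: Dict[str, Extension] = {}

    def add(self, ext: Extension) -> None:
        self.extensions[ext.name] = ext

    def list_tools(self) -> List[str]:
        # Prefix each tool with its extension name so names cannot collide.
        return [f"{e.name}__{t}" for e in self.extensions.values() for t in e.tools]

    def call(self, qualified_name: str, **kwargs: Any) -> Any:
        ext_name, tool_name = qualified_name.split("__", 1)
        return self.extensions[ext_name].tools[tool_name].handler(**kwargs)


# Usage: a tiny hypothetical "notes" extension whose tool keeps state between calls.
notes = Extension("notes")


def add_note(text: str) -> int:
    notes.state.setdefault("items", []).append(text)
    return len(notes.state["items"])


notes.register("add", "Append a note and return the note count", add_note)

runtime = Runtime()
runtime.add(notes)
print(runtime.list_tools())                              # ['notes__add']
print(runtime.call("notes__add", text="check CI logs"))  # 1
```

Keeping state inside the extension rather than the runtime is the design choice that lets each capability evolve independently while the runtime stays a thin dispatcher.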

Built-In Tooling and Workflow Model

A few technical building blocks stand out:

| Technical element | What it does | Why it matters |
|---|---|---|
| Developer extension | Handles file editing, shell commands, project setup, code editing, and codebase analysis | Gives Goose a serious default capability set out of the box |
| Recipes | Package prompts, extensions, parameters, and subrecipes into reusable YAML workflows | Turns ad hoc sessions into portable automations |
| Subagents | Spawn isolated worker instances for delegated tasks | Helps with context separation and parallel work |
| Headless mode | Runs tasks non-interactively and exits automatically | Makes Goose viable for CI/CD, servers, and scheduled automation |
| Projects | Tracks working directories, last instructions, and session IDs | Adds continuity for CLI workflows across codebases |
| MCP Apps | Lets extensions render rich UI components inside Goose Desktop | Moves beyond plain-text agent responses |
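What turns a recipe from a saved prompt into a reusable workflow is parameterization: the same file runs against different inputs. The sketch below shows that idea on a recipe already loaded from YAML into a dict. The field names, the `{{ name }}` placeholder syntax, and the required/default rules are assumptions for illustration, not Goose’s documented recipe schema.

```python
import re
from typing import Dict, Optional

# Hypothetical recipe, shaped like what a YAML loader might return.
# Field names are illustrative, not Goose's documented schema.
RECIPE = {
    "title": "Triage failing tests",
    "prompt": "Run the test suite in {{ repo_dir }} and summarize failures in {{ format }}.",
    "parameters": {
        "repo_dir": {"required": True},
        "format": {"required": False, "default": "markdown"},
    },
    "extensions": ["developer"],
}

PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render_prompt(recipe: dict, values: Optional[Dict[str, str]] = None) -> str:
    """Fill {{ name }} placeholders from caller values, falling back to defaults."""
    values = dict(values or {})
    resolved: Dict[str, str] = {}
    for name, spec in recipe["parameters"].items():
        if name in values:
            resolved[name] = values[name]
        elif "default" in spec:
            resolved[name] = spec["default"]
        elif spec.get("required"):
            raise ValueError(f"missing required parameter: {name}")

    def substitute(match: re.Match) -> str:
        key = match.group(1)
        if key not in resolved:
            raise ValueError(f"undeclared parameter in prompt: {key}")
        return resolved[key]

    return PLACEHOLDER.sub(substitute, recipe["prompt"])


print(render_prompt(RECIPE, {"repo_dir": "./api"}))
# Run the test suite in ./api and summarize failures in markdown.
```

Failing loudly on a missing required value matters most in headless runs, where no one is present to answer a follow-up question.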

Safety and Control Stack

Goose is unusually explicit about agent safety for an open-source tool.

Key protections documented publicly include:

- prompt injection detection

- tool permission controls

- sandbox mode on macOS with filesystem and outbound connection restrictions

- adversary mode, where a separate reviewer agent checks tool calls before execution

The adversary reviewer is especially notable because it is designed to inspect whether a tool call actually matches the user’s intent, not just whether it triggers a simple static rule. That is a more advanced design than the basic allow/deny prompts many local agents still rely on.
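The difference between a static permission rule and an intent-aware review can be sketched as a two-stage gate in front of every tool call. In Goose the reviewer is a separate agent; here a keyword heuristic stands in for it so the example runs without a model. `ToolCall`, `PERMISSIONS`, the permission mode names, and `keyword_reviewer` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class ToolCall:
    tool: str
    args: Dict[str, str]


# Stage 1: hypothetical static, per-tool permission modes.
PERMISSIONS = {"read_file": "allow", "shell": "review", "delete_repo": "deny"}

Reviewer = Callable[[str, ToolCall], bool]


def keyword_reviewer(user_intent: str, call: ToolCall) -> bool:
    """Stand-in for an LLM reviewer: approve a risky shell command only
    when the user's request explicitly asks to delete something."""
    command = call.args.get("command", "")
    risky = any(token in command for token in ("rm -rf", "git push --force"))
    return not risky or "delete" in user_intent.lower()


def gate(user_intent: str, call: ToolCall, reviewer: Reviewer) -> str:
    mode = PERMISSIONS.get(call.tool, "review")  # unknown tools default to review
    if mode == "deny":
        return "blocked: tool denied by policy"
    if mode == "allow":
        return "executed"
    # Stage 2: compare the concrete call against what the user actually asked for.
    if reviewer(user_intent, call):
        return "executed"
    return "blocked: reviewer found mismatch with user intent"


intent = "summarize the test failures"
print(gate(intent, ToolCall("shell", {"command": "pytest -q"}), keyword_reviewer))
print(gate(intent, ToolCall("shell", {"command": "rm -rf build"}), keyword_reviewer))
```

The same `rm -rf build` call is blocked for a request to summarize test failures but allowed for a request to delete the build folder, which is exactly the distinction a fixed allow/deny rule cannot express.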

Where Goose Fits Technically

Goose is not merely a wrapper around one model API. It is closer to a local-first orchestration runtime for agentic work. The architecture increasingly looks like this:

- User surface: Desktop, CLI, API, or ACP client

- Reasoning layer: Chosen model/provider or ACP agent

- Execution layer: Extensions and built-in developer tooling

- Workflow layer: Recipes, subrecipes, headless tasks, project state

- Safety layer: Permissions, detection, sandboxing, adversary review

- Interface layer: Text UI or richer MCP Apps inside Desktop

That stack is exactly why Goose feels more substantial than a simple open-source copilot.

What to Watch Next

- Whether Goose can keep the product coherent as its feature set expands

- How strongly the AAIF / Linux Foundation move translates into ecosystem trust and outside contributions

- Whether MCP Apps become a meaningful differentiator versus text-only agent environments

- How well Goose’s security model holds up as more users run higher-autonomy workflows

- Whether recipes and headless execution push Goose into enterprise and CI-heavy use cases

Conclusion

Goose is becoming one of the more interesting open-source agent platforms because it is solving for the whole runtime, not just the model connection. Its mix of local execution, open standards, workflow packaging, and safety controls makes it feel like infrastructure for agentic work rather than a flashy chatbot wrapper.

That does not make it simple. In fact, Goose’s biggest challenge may be that it is trying to do many hard things at once. But the ambition is exactly what makes it worth attention. If the next phase of AI software is about turning models into dependable working systems, Goose is building in the right direction.