Google Launches Gemma 4, Pushing Open Multimodal AI From Phones to Workstations

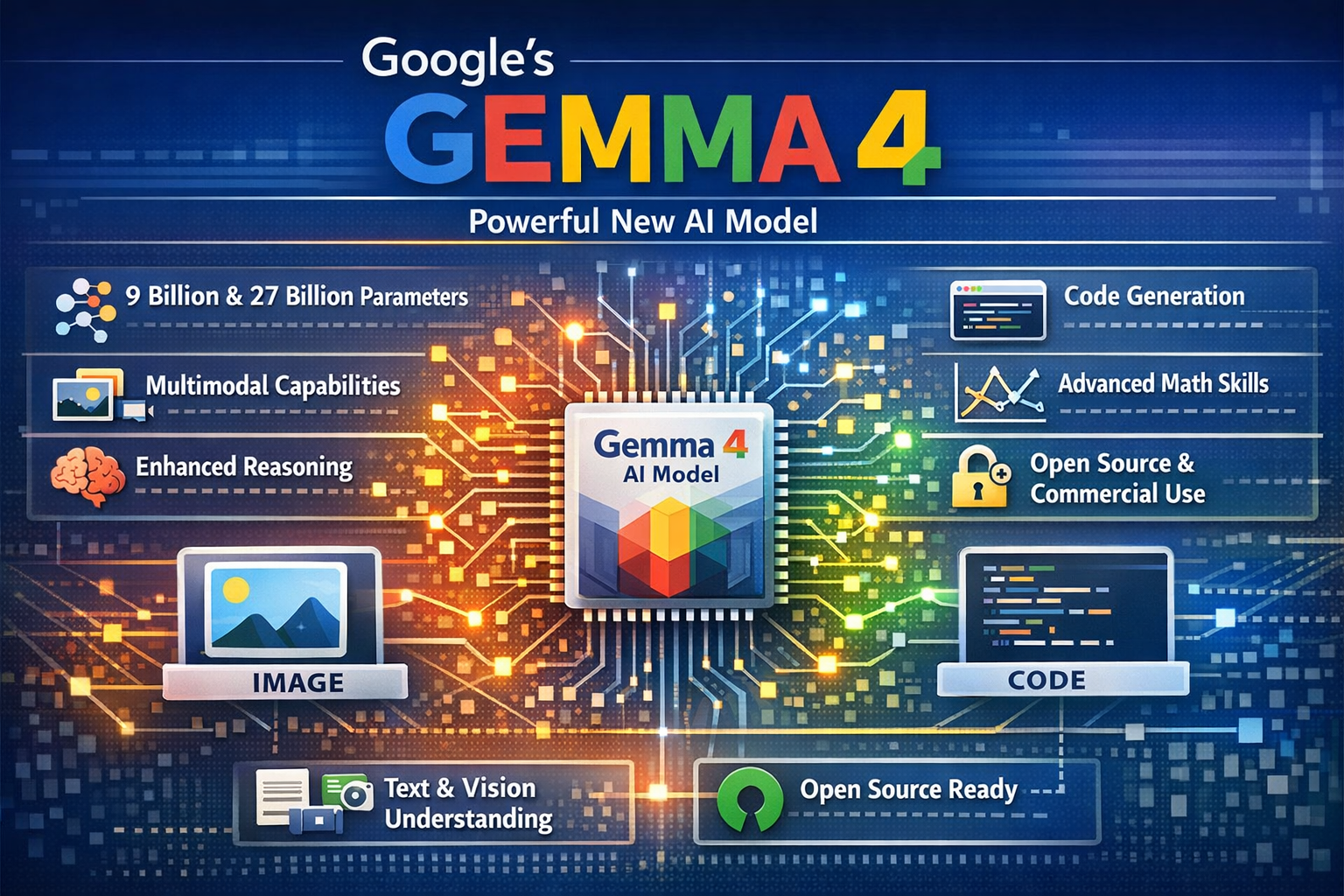

Google launched Gemma 4, a family of Apache 2.0-licensed multimodal AI models designed for deployment from mobile devices to workstations, aiming to balance efficiency with capability across various hardware.

Subheadline

Google DeepMind has unveiled Gemma 4, a new family of Apache 2.0-licensed open models designed to span everything from mobile and edge devices to local workstation deployments. The release matters because it pairs long-context, multimodal AI with a broad open-source rollout across Hugging Face, Ollama, transformers, llama.cpp, MLX, and browser-based tooling.

Lead

Google is making a bigger play for the open-model market with Gemma 4, a new generation of multimodal models that aim to deliver more reasoning power per parameter while remaining practical to run on local hardware. Rather than shipping a single flagship model, the company is dividing the lineup into smaller “effective” edge models and larger workstation-oriented variants, a strategy that reflects how AI deployment is fragmenting across phones, PCs, and cloud-connected developer tools.

The pitch is straightforward: developers should not have to choose between capable multimodal models and deployability. With Gemma 4, Google is trying to make the case that open models can be both more useful and easier to place wherever they are needed, whether that means an offline mobile app, a coding assistant on a laptop, or an agentic workflow running from a local server.

At a Glance

- Google announced Gemma 4 on April 2, 2026, positioning it as its most capable open model family so far.

- The lineup includes E2B, E4B, 26B A4B MoE, and 31B Dense variants.

- Smaller models support text, image, and audio input; larger models support text and image.

- Context windows stretch to 128K tokens on edge models and 256K tokens on larger models.

- Google says Gemma 4 is released under an Apache 2.0 license, with weights available through Hugging Face, Ollama, Kaggle, LM Studio, and Docker.

- The company says Gemma has now surpassed 400 million downloads since the first generation, with more than 100,000 community variants in circulation.

What Happened

Google DeepMind and Google’s developer teams launched Gemma 4 as the next major step in the company’s open-model strategy. According to Google’s announcement, the new family is built from the same research and technology stack behind Gemini 3, but repackaged into open-weight models focused on efficiency, local deployment, and broad developer accessibility.

The release is notable not only for the models themselves, but for how broadly they are being distributed from day one. Google’s own documentation points developers to Google AI Studio for testing larger variants, while Hugging Face’s launch post emphasizes immediate support across the broader open-source stack, including transformers, llama.cpp, MLX, transformers.js, WebGPU, Rust tooling, and fine-tuning libraries. Ollama, meanwhile, has already packaged Gemma 4 into ready-to-run local variants, underscoring Google’s effort to meet developers where they already work.

Key Facts / Comparison

| Model | Architecture | Context Window | Modalities | Best-Fit Deployment | Notable Detail |
|---|---|---|---|---|---|
| Gemma 4 E2B | Dense, “effective” small model | 128K | Text, Image, Audio | Phones, edge devices, local apps | Designed for compute and memory efficiency |
| Gemma 4 E4B | Dense, “effective” small model | 128K | Text, Image, Audio | Edge systems, laptops, lightweight local inference | Audio-capable small model with stronger headroom |
| Gemma 4 26B A4B | Mixture of Experts | 256K | Text, Image | Consumer GPUs, workstations, local agents | 25.2B total parameters, but only 3.8B active at inference |
| Gemma 4 31B | Dense | 256K | Text, Image | High-end local workstations, research, fine-tuning | Highest-quality model in the family |

Benchmark Snapshot

| Benchmark | 31B | 26B A4B | E4B | E2B |
|---|---|---|---|---|
| MMLU Pro | 85.2% | 82.6% | 69.4% | 60.0% |
| AIME 2026 (no tools) | 89.2% | 88.3% | 42.5% | 37.5% |
| LiveCodeBench v6 | 80.0% | 77.1% | 52.0% | 44.0% |
| GPQA Diamond | 84.3% | 82.3% | 58.6% | 43.4% |
| MMMU Pro | 76.9% | 73.8% | 52.6% | 44.2% |
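
One way to read the two tables together is to ask how much of the 31B model's score the 26B A4B variant keeps while activating only a fraction of its parameters. The sketch below does that arithmetic using only the figures quoted above; the function names are illustrative, and the ratios say nothing about speed or memory on any particular hardware.

```python
# Scores (percent) copied from the Benchmark Snapshot table above.
SCORES = {
    "MMLU Pro": {"31B": 85.2, "26B A4B": 82.6},
    "AIME 2026 (no tools)": {"31B": 89.2, "26B A4B": 88.3},
    "LiveCodeBench v6": {"31B": 80.0, "26B A4B": 77.1},
    "GPQA Diamond": {"31B": 84.3, "26B A4B": 82.3},
    "MMMU Pro": {"31B": 76.9, "26B A4B": 73.8},
}

# Parameter counts (billions) quoted for the 26B A4B model.
TOTAL_PARAMS_B = 25.2
ACTIVE_PARAMS_B = 3.8


def active_fraction(active_b, total_b):
    """Share of the MoE model's parameters used for each token."""
    return active_b / total_b


def retention(scores, small="26B A4B", large="31B"):
    """Ratio of the smaller model's score to the larger model's, per benchmark."""
    return {name: row[small] / row[large] for name, row in scores.items()}


def report():
    """Build a short human-readable summary of both ratios."""
    lines = [f"active parameters: {active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B):.1%} of total"]
    for name, ratio in retention(SCORES).items():
        lines.append(f"{name}: {ratio:.1%} of the 31B score")
    return lines


for line in report():
    print(line)
```

On these published numbers the MoE variant stays within a few points of the dense model on every listed benchmark, which is the trade Google is advertising; independent testing would be needed to confirm it.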

Background and Context

Gemma has become Google’s most visible answer to the surge in demand for open models that developers can download, adapt, and run outside a tightly controlled hosted API. That matters because the market has shifted: many teams still want cloud-hosted frontier models, but they also increasingly want smaller, inspectable systems for privacy-sensitive workloads, offline use, and cost control.

Google’s own framing makes that strategy explicit. The company says Gemma 4 complements Gemini rather than replacing it, giving developers a split stack: hosted frontier systems on one side, portable open-weight models on the other. That is a practical response to how AI software is being built in 2026, with some tasks moving toward local-first assistants, edge inference, and hybrid agent architectures.

The scale of the ecosystem also gives Gemma 4 extra weight. Google says the broader Gemma family has passed 400 million downloads and inspired more than 100,000 variants. That does not automatically guarantee leadership, but it does mean Gemma is no longer a side project. It is a platform bid.

Why This Matters

For developers, Gemma 4 is important because it tries to lower the barrier between experimentation and deployment.

Instead of forcing teams to start in the cloud and stay there, Google is offering a ladder:

- Edge models for offline and near-zero-latency use cases.

- Workstation models for coding, local agents, and multimodal reasoning.

- Open ecosystem support so teams can plug Gemma 4 into existing tools rather than rebuild around a single vendor stack.

For enterprises and public-sector buyers, the appeal is different. Open weights, long context windows, and local deployment options create a more credible path for controlled environments where data residency, auditability, or disconnected operation matter.

For Google itself, the release helps defend relevance in the open-model conversation. The company’s own positioning suggests Gemma 4 is meant to strengthen Google’s presence across both proprietary and open AI workflows, rather than leave the local and open ecosystem to rivals.

Insight and Industry Analysis

The most interesting part of Gemma 4 is not just its benchmark performance. It is Google’s packaging strategy.

The company is effectively acknowledging that one giant model does not solve every deployment problem. Mobile devices want efficiency and battery discipline. Developers want local coding help without always paying API costs. Researchers want open weights they can fine-tune. Browser and JavaScript communities want models that can reach WebGPU. By launching the same family across those lanes at once, Google is treating open models less like a research giveaway and more like product infrastructure.

That is also why the Hugging Face and Ollama pieces matter. A model release in 2026 is not only about raw scores; it is about day-one usability. Support in transformers, llama.cpp, MLX, Rust tooling, and local model runners shortens the distance between announcement day and real adoption. In practice, that may matter more than a few benchmark points.

Still, Gemma 4’s strategy also reveals a tension in the open-model market. Smaller edge models are increasingly multimodal and efficient, but the heaviest reasoning gains still sit in larger variants that need stronger hardware or aggressive quantization. That means the phrase “runs locally” can describe very different user experiences depending on the model, the hardware, and the inference stack.
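
A rough back-of-the-envelope calculation shows why quantization changes what “runs locally” means. The sketch below estimates weight storage only, assuming a nominal 31B parameters for the dense model (inferred from its name) and the 25.2B total quoted for the MoE model; it ignores the KV cache, activations, and runtime overhead, so real memory use will be higher.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate weight storage in decimal gigabytes (weights only)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Parameter counts in billions. 25.2 is the total quoted in the article;
# 31.0 is an assumption based on the model's name.
MODELS = {
    "26B A4B (MoE, 25.2B total)": 25.2,
    "31B Dense (nominal)": 31.0,
}

# Common precisions: 16-bit, 8-bit, and 4-bit weights.
BIT_WIDTHS = (16, 8, 4)


def memory_table():
    """Map each model to its estimated weight size at each bit width."""
    return {
        name: {bits: weight_memory_gb(params, bits) for bits in BIT_WIDTHS}
        for name, params in MODELS.items()
    }


for name, sizes in memory_table().items():
    row = ", ".join(f"{bits}-bit: {gb:.1f} GB" for bits, gb in sizes.items())
    print(f"{name}: {row}")
```

Note that a mixture-of-experts model typically still needs all of its weights resident in memory, even though only a subset is active per token, so the 3.8B active figure reduces compute rather than storage.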

Pros and Cons

Pros

- Apache 2.0 licensing: easier commercial adoption and fewer legal barriers.

- Broad deployment story: spans cloud testing, local servers, laptops, edge devices, and browser-adjacent tooling.

- Long-context support: 128K and 256K windows make large documents, code repositories, and multimodal tasks more practical.

- Multimodal design: image understanding is built in across the family, while audio support arrives on smaller edge-focused models.

- Open-source ecosystem readiness: strong day-one support reduces integration friction.

Cons

- Uneven modality support: audio is not available on the larger 26B and 31B models.

- “Local” can still be demanding: the biggest models remain best suited to stronger GPUs or optimized runtimes.

- Benchmark interpretation requires care: performance depends on model variant, “thinking” mode, and inference setup.

- Product complexity: four core sizes and multiple deployment paths may be powerful, but they also make choice harder for less technical teams.

Technical Deep Dive

Gemma 4 is not just a scale-up release. It adds several architectural ideas meant to improve efficiency and make long-context multimodal inference more practical.

Architecture Highlights

| Component | What Google / ecosystem docs describe | Why it matters |
|---|---|---|
| Hybrid attention | Alternates sliding-window attention with full global attention | Balances memory efficiency with long-context awareness |
| Proportional RoPE | Used on global layers for longer context handling | Helps extend context without a simple brute-force scale-up |
| Per-Layer Embeddings (PLE) | Smaller models add layer-specific embedding signals | Improves parameter efficiency for edge deployments |
| Shared KV Cache | Later layers can reuse key-value states from earlier layers | Cuts inference compute and memory overhead |
| Vision encoder upgrades | Variable aspect ratios and configurable image token budgets | Better fit for documents, UI, charts, OCR, and varied image layouts |
| Audio encoder on small models | USM-style conformer-based encoder | Enables speech and audio input on E2B/E4B |
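
To make the hybrid attention and shared KV cache rows more concrete, here is a minimal sketch of the general idea, not Gemma 4's actual implementation. The layer count, the ratio of sliding to global layers, the window size, and the number of cache-sharing layers are all hypothetical values chosen for illustration; the documentation quoted above does not specify them here.

```python
def causal_mask(seq_len, window=None):
    """mask[i][j] is True when query position i may attend to key position j.

    With window=None this is ordinary causal (global) attention; with a
    window, each query only sees the most recent `window` positions.
    """
    mask = []
    for i in range(seq_len):
        row = []
        for j in range(seq_len):
            visible = j <= i
            if window is not None:
                visible = visible and (i - j) < window
            row.append(visible)
        mask.append(row)
    return mask


def layer_pattern(num_layers, global_every):
    """Hypothetical schedule: every `global_every`-th layer is global."""
    return [
        "global" if (i + 1) % global_every == 0 else "sliding"
        for i in range(num_layers)
    ]


def cached_positions(seq_len, kinds, window, num_shared=0):
    """Count key/value positions kept in cache across all layers.

    Sliding layers keep at most `window` positions, global layers keep the
    full sequence, and the last `num_shared` layers are assumed to reuse
    earlier layers' states and therefore store nothing of their own.
    """
    owning_layers = kinds[: len(kinds) - num_shared]
    return sum(
        seq_len if kind == "global" else min(seq_len, window)
        for kind in owning_layers
    )


kinds = layer_pattern(num_layers=6, global_every=3)
full = cached_positions(1000, ["global"] * 6, window=100)
hybrid = cached_positions(1000, kinds, window=100)
shared = cached_positions(1000, kinds, window=100, num_shared=2)
print(kinds)
print(f"all-global: {full}, hybrid: {hybrid}, hybrid + sharing: {shared}")
```

Under these toy settings the cache shrinks at each step, which is the intuition behind pairing the two techniques for long-context inference on constrained hardware.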

Capabilities Called Out by the Documentation

- Reasoning / “thinking” mode for step-by-step problem solving.

- Function calling and structured JSON output for agentic workflows.

- Native system prompt support for more controlled interactions.

- Image understanding for OCR, charts, documents, screens, and handwriting.

- Video understanding through frame-sequence processing.

- Multilingual coverage with pretraining across more than 140 languages.
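
The function calling and structured JSON capability is what makes agentic workflows possible: the model emits a machine-readable call, and the application decides whether to run it. The sketch below shows the application side only, under assumptions of our own: the `name`/`arguments` JSON shape and the `get_weather` tool are hypothetical, and Gemma 4's actual function-calling format may differ.

```python
import json


def get_weather(city):
    """Hypothetical tool; a real one would call a weather service."""
    return f"(stub) weather for {city}"


# Registry of tools the application is willing to execute.
TOOLS = {"get_weather": get_weather}


def dispatch(model_output):
    """Validate a JSON tool call produced by a model and run the tool.

    Expects an object like {"name": "...", "arguments": {...}} (an assumed
    shape). Raises ValueError on anything malformed or unknown so that
    untrusted model output never reaches a tool unchecked.
    """
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")
    name = call.get("name")
    args = call.get("arguments", {})
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")
    return TOOLS[name](**args)


print(dispatch('{"name": "get_weather", "arguments": {"city": "Paris"}}'))
```

Keeping an explicit allow-list of tools, rather than executing whatever name the model produces, is a common design choice for local agents.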

Deployment Notes

Google says the smaller E2B and E4B models are aimed at mobile and edge scenarios, while the 26B A4B MoE and 31B Dense variants target workstations and consumer GPUs. The Hugging Face launch post notes support in transformers, llama.cpp, MLX, transformers.js, WebGPU, and other runtimes, while Ollama’s library shows immediately downloadable local tags for E2B, E4B, 26B, and 31B variants.
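
For readers who want to try the local route, the sketch below shows roughly what a request to a locally running Ollama server looks like, using its standard `/api/generate` endpoint on the default port. The model tag `gemma4` is an assumption taken from the library URL in the references; the exact tags for each variant should be checked on Ollama's library page, and the model must already be pulled.

```python
import json
import urllib.request

# Default address of a local Ollama server.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="gemma4"):
    """Build a non-streaming generate request. The tag "gemma4" is assumed."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model="gemma4", timeout=120):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


# Example (requires a running Ollama server with the model available):
# print(generate("Summarize the Gemma 4 lineup in one sentence."))
```

Because the call goes to localhost, prompts and outputs stay on the machine, which is the main appeal of this deployment path for privacy-sensitive work.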

That deployment breadth is one of Gemma 4’s most practical technical strengths. A capable model is useful; a capable model that arrives already wired into the tools developers actually use is much more valuable.

What to Watch Next

- Whether independent testing confirms Google’s efficiency claims across consumer hardware.

- How quickly the community builds specialized Gemma 4 fine-tunes for coding, local agents, and enterprise workflows.

- Whether browser-based and on-device demos mature into production apps rather than tech showcases.

- How Gemma 4’s open adoption compares with competing open-model ecosystems over the next few months.

- Whether Google expands the lineup further with additional quantized, cloud-hosted, or domain-specialized variants.

Conclusion

Gemma 4 is a meaningful release not because it tries to be one universal model, but because it accepts that AI is now deployed across many environments at once. Google is betting that open models win when they are not only strong on benchmarks, but also easy to run, adapt, and ship.

If Gemma 4 delivers on that promise in real-world developer workflows, it could become one of the more consequential open-model launches of 2026: not merely a research milestone, but a practical bridge between frontier AI and everyday hardware.

References

- https://deepmind.google/models/gemma/gemma-4/

- https://blog.google/innovation-and-ai/technology/developers-tools/gemma-4/

- https://ai.google.dev/gemma/docs/core/model_card_4

- https://huggingface.co/blog/gemma4

- https://ollama.com/library/gemma4